Despite the increasing prominence of artificial intelligence (AI), many educators continue to resist integrating the technology into their classrooms. Concern about academic dishonesty is one of the most common arguments against the use of AI in education. But while plenty of students use AI to cheat, others use it productively to aid their education without undermining the learning process.

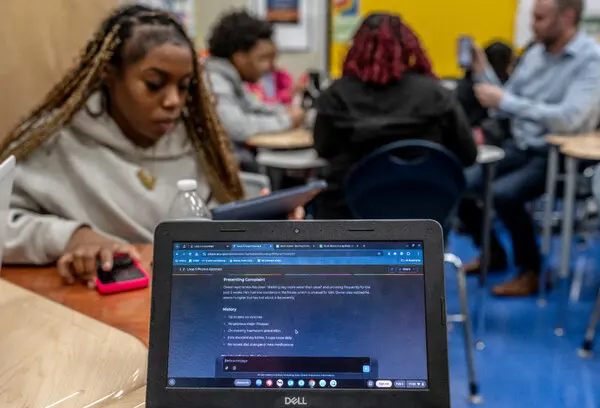

Some of the primary uses of AI in school relate to studying. AI can summarize information and highlight the key points students need to know. This can be especially useful in Advanced Placement (AP) classes, where a set curriculum is available online, making it easier for AI tools to understand the complete educational context. “I’ve used it… to make [AP US History] flashcards about important key terms,” said Kylie Lee, TL junior.

Many education technology companies have invested in their own artificial intelligence software tailored to studying. Quizlet, a company that offers digital learning tools, lets students upload notes, from which its AI software automatically generates study guides, flashcards, and more.

Students also commonly use AI for research. AI chatbots can summarize or enumerate sources, and there are many tools specifically designed for academic research. For example, Undermind, an AI-powered research platform, pairs users with an AI assistant that reviews scientific literature related to a given topic. The website can sort through thousands of papers to find the most relevant information.

Further, AI can serve as a personalized tutor for students struggling with a specific topic. “I’ve used ChatGPT to help me with science,” said Sierra Powell ’27, “to explain formulas and how they would work, and to be able to use them more easily.”

Many students also use artificial intelligence to proofread or edit written work. This can be an effective way to use AI for help with writing while still preserving student learning. During the 2024–2025 school year, TL English teacher Mackenzie Bedford had her English 10 students use Khanmigo, an AI-powered educational tool from Khan Academy, to assist in revising essays. The use of Khanmigo allowed students to receive personalized feedback and learn how to edit academic work.

While there are many effective ways to use AI to aid learning, some students’ dishonest use of the technology has given it a bad reputation in education. According to College Board research on attitudes toward AI in higher education, “92% of faculty are concerned about plagiarism or dishonesty facilitated by AI.”

“When people hear AI and using it for homework they associate that more negatively,” said Lee.

The stereotype that AI is primarily used for cheating has generated friction between students and teachers. Many teachers feel that they cannot trust their students. “I want to believe the best about my kids, but AI has complicated this,” wrote Lauren Boulanger, a Massachusetts high school English teacher, in an Education Week opinion piece. “Distrust creates a barrier between me and my students that feels foreign.”

At the same time, many students feel that their teachers trust error-prone AI detectors more than the students themselves, causing them to feel defensive and to anticipate cheating accusations even when they complete their work honestly.

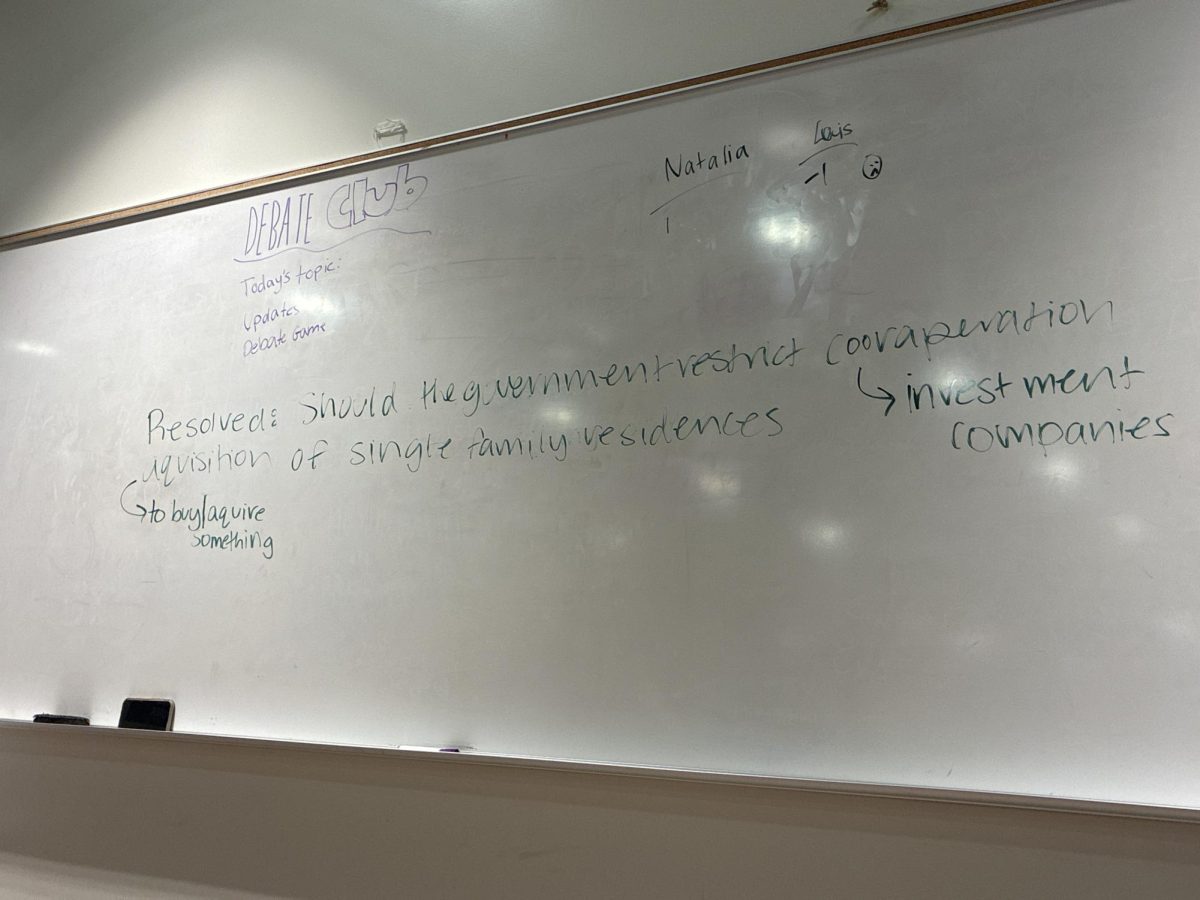

A main contributor to the eroding relationship between teachers and students is not necessarily artificial intelligence itself, but the lack of transparency and communication surrounding it in schools. Teachers often do not set clear guidelines for AI use on assignments, leading many students to assume that all use, even use that is not detrimental to learning, is off limits. And because many students fear that the mere mention of AI in the classroom will lead to accusations of academic dishonesty, they often refrain from asking their teachers what AI use is permitted.

Teachers’ suspicion of students is not unwarranted, since many students do use AI to cheat on assignments and assessments, but neither is students’ mistrust of their educators. Transparency and mutual understanding from both parties seem necessary for preserving student-teacher relations in the age of AI. Teachers can clarify their rules and recognize the productive ways students use AI, while students can be more understanding of their teachers’ distrust and respectful of any guidelines put in place.